OpenShell

Run any agent more safely. Shape its access, not its capabilities, and help keep inference private.

The Runtime for Autonomous Agents

Modern agents need the autonomy to code, research, and evolve—but they shouldn't have unrestricted access to your host system. OpenShell applies the isolation principles of a web browser to the agentic workflow. Every session is sandboxed, every resource is metered, and every permission is verified by the runtime before execution.

- Unified Governance: Manage coding agents, research assistants, and AI workflows under one policy layer.

- Host-Agnostic: Run identical security profiles on any operating system.

- Self-Evolving Safety: Enable agents to learn new skills and install packages without risking system integrity.

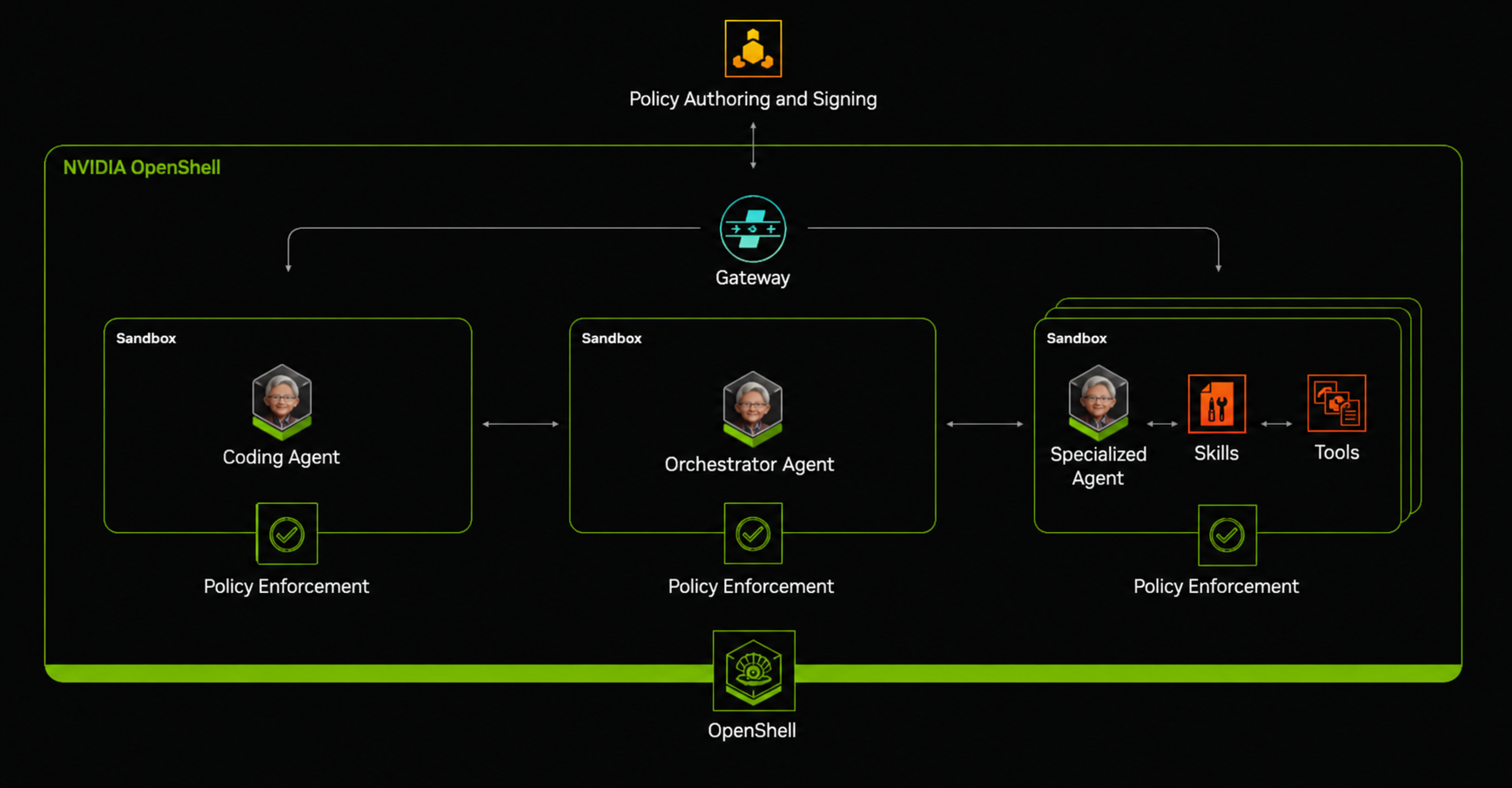

The OpenShell Architecture

OpenShell is built on three foundational pillars that bridge the gap between agentic autonomy and enterprise compliance.

1. Programmable, Individual Sandboxes

Purpose-built isolation for autonomous agents.

OpenShell creates an individual, isolated sandbox for each agent and sub-agent. These sandboxes are designed for AI that modifies its own environment: they handle skill verification and network isolation, providing a "break-safe" environment where agents can experiment without touching the host. OpenShell also applies policy updates live, so developer approvals take effect in real time, and it keeps a full audit trail in which every 'allow' and 'deny' decision is logged for forensic-level oversight.
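To make the audit-trail idea concrete, here is a minimal Python sketch of a per-agent session that logs every decision and accepts a live approval. It is an illustration only: `SandboxSession`, `request`, `approve`, and the log fields are hypothetical names invented for this example, not OpenShell's actual API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SandboxSession:
    """Hypothetical per-agent session; not an OpenShell class."""

    agent_id: str
    allowed_actions: set[str] = field(default_factory=set)
    audit_log: list[dict] = field(default_factory=list)

    def request(self, action: str) -> bool:
        # Deny by default, and record the decision either way.
        allowed = action in self.allowed_actions
        self.audit_log.append({
            "agent": self.agent_id,
            "action": action,
            "decision": "allow" if allowed else "deny",
        })
        return allowed

    def approve(self, action: str, approver: str) -> None:
        # A live policy update: takes effect on the next request
        # and is itself written to the audit trail.
        self.allowed_actions.add(action)
        self.audit_log.append({
            "agent": self.agent_id,
            "action": action,
            "decision": "policy_update",
            "approver": approver,
        })


session = SandboxSession(agent_id="agent-1")
first = session.request("install:requests")    # denied, nothing approved yet
session.approve("install:requests", approver="dev@example.com")
second = session.request("install:requests")   # allowed after the approval
```

After this sequence the log holds three entries in order (a deny, the policy update, and an allow), which is the kind of record an auditor could replay later.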

2. Granular Policy Enforcement Engine

Control the "What," "Where," and "How" of execution.

The engine evaluates actions at the binary, path, and method levels, and enforcement runs across the filesystem, network, and process layers. It allows agents to be autonomous where it matters, such as installing a verified skill, while blocking unreviewed binaries and unauthorized network calls. OpenShell’s enforcement engine also supports constraint reasoning: if an agent hits a wall, it can reason about the roadblock and propose a policy update for your final approval.
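One way to picture evaluation at the binary, path, and method levels is a deny-by-default allowlist. The sketch below is an assumption-laden illustration, not OpenShell's policy format: `Rule`, `Policy`, `evaluate`, and `propose_update` are hypothetical names, and glob matching via Python's `fnmatchcase` is a stand-in for whatever matching the real engine uses.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """Hypothetical allow rule; not OpenShell's policy schema."""

    binary: str  # glob for the executable, e.g. "/usr/bin/git"
    path: str    # glob for the target path
    method: str  # exact operation name, e.g. "read" or "write"


@dataclass
class Policy:
    allow: list[Rule] = field(default_factory=list)

    def evaluate(self, binary: str, path: str, method: str) -> str:
        # An action is allowed only if one rule matches all three levels.
        for rule in self.allow:
            if (fnmatchcase(binary, rule.binary)
                    and fnmatchcase(path, rule.path)
                    and method == rule.method):
                return "allow"
        return "deny"

    def propose_update(self, binary: str, path: str, method: str) -> Rule:
        # Build the narrowest rule that would unblock this exact action.
        # It is returned for human review and is NOT applied automatically.
        return Rule(binary=binary, path=path, method=method)


policy = Policy(allow=[Rule("/usr/bin/git", "/workspace/*", "write")])
git_write = policy.evaluate("/usr/bin/git", "/workspace/repo/README.md", "write")
curl_write = policy.evaluate("/usr/bin/curl", "/workspace/out.bin", "write")

# The blocked action becomes a proposal; only an explicit approval adds it.
proposal = policy.propose_update("/usr/bin/curl", "/workspace/out.bin", "write")
```

Keeping `propose_update` separate from the allowlist mirrors the approval step described above: the proposal changes nothing until an operator appends it to `policy.allow`.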

3. Gateway

Keep sensitive data private.

The gateway is the control point where autonomous agent actions are evaluated before they reach the host environment. It translates policy rules into runtime enforcement decisions, governing what an agent can read, write, execute, access over the network, or delegate to external tools. By centralizing policy management, the gateway gives operators a clear place to define trust boundaries, audit decisions, and adapt permissions as an agent’s task evolves, while preserving the isolation guarantees that make OpenShell safe for long-running autonomous work.
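As a rough sketch of how declarative rules might be translated into runtime decisions, the Python example below compiles a policy dictionary into a lookup keyed by action kind. The `Gateway` class, the `ActionKind` values, and the dictionary layout are all hypothetical, chosen only to mirror the read, write, execute, network, and delegate categories named above.

```python
from __future__ import annotations

from enum import Enum
from fnmatch import fnmatchcase


class ActionKind(Enum):
    READ = "read"
    WRITE = "write"
    EXECUTE = "execute"
    NETWORK = "network"
    DELEGATE = "delegate"


class Gateway:
    """Hypothetical central decision point; not OpenShell's gateway API."""

    def __init__(self, policy: dict[str, list[str]]):
        # Translate the declarative policy into per-kind glob patterns.
        # Kinds missing from the policy get no patterns, so they are denied.
        self._patterns = {
            kind: tuple(policy.get(kind.value, ())) for kind in ActionKind
        }

    def decide(self, kind: ActionKind, target: str) -> bool:
        # Allow only when the target matches a pattern for this action kind.
        return any(fnmatchcase(target, p) for p in self._patterns[kind])


gateway = Gateway({
    "read": ["/workspace/*"],
    "network": ["api.example.com"],
})
can_read = gateway.decide(ActionKind.READ, "/workspace/notes.md")
can_write = gateway.decide(ActionKind.WRITE, "/workspace/notes.md")
```

Because every decision flows through one object, trust boundaries live in a single policy definition rather than being scattered across tools.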